Python is a popular tool for implementing web scraping. The Python programming language is also used for other projects related to cybersecurity, penetration testing, and digital forensics. Using only core Python, web scraping can be performed without any third-party tools.

- Python web scraping makes it easy to gather data for building brand intelligence and studying customer reviews of your product or service.

- Sep 28, 2017: Crawling, scraping, processing, and cleaning data is a necessary activity for a whole host of tasks, from mapping a website's structure to collecting data that's in a web-only format or, perhaps, locked away in a proprietary database.

- Loading web pages with 'requests': the requests module allows you to send HTTP requests.

- Storing the results of web scraping in a database: I have written code for web scraping in Python. The code extracts MacBook data from Amazon using Selenium.

Once you’ve put together enough web scrapers, you start to feel like you can do it in your sleep. I’ve probably built hundreds of scrapers over the years for my own projects, as well as for clients and students in my web scraping course.

Occasionally though, I find myself referencing documentation or re-reading old code looking for snippets I can reuse. One of the students in my course suggested I put together a “cheat sheet” of commonly used code snippets and patterns for easy reference.

I decided to publish it publicly as well – as an organized set of easy-to-reference notes – in case they’re helpful to others.

While it’s written primarily for people who are new to programming, I also hope that it’ll be helpful to those who already have a background in software or Python, but who are looking to learn some web scraping fundamentals and concepts.

Table of Contents:

- Extracting Content from HTML

- Storing Your Data

- More Advanced Topics

Useful Libraries

For the most part, a scraping program deals with making HTTP requests and parsing HTML responses.

I always make sure I have requests and BeautifulSoup installed before I begin a new scraping project. From the command line: `pip install requests beautifulsoup4`

Then, at the top of your .py file, make sure you’ve imported these libraries correctly.
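For reference, the two imports look like this (note that the package installed as beautifulsoup4 is imported under the name bs4):

```python
import requests                # HTTP client for fetching pages
from bs4 import BeautifulSoup  # HTML parser (installed as beautifulsoup4)
```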

Making Simple Requests

Make a simple GET request (just fetching a page)

Make a POST request (usually used when sending information to the server, like submitting a form)

Pass query arguments aka URL parameters (usually used when making a search query or paging through results)
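A minimal sketch of the three request styles above, assuming a hypothetical search URL and made-up parameter names (q, page, name). The last lines use requests.Request(...).prepare() to preview what would be sent, so the snippet runs without network access:

```python
import requests

URL = "http://example.com/search"  # hypothetical URL for illustration

def fetch(url):
    # Simple GET: just fetch the page
    return requests.get(url)

def submit(url, form_data):
    # POST: the dict is form-encoded into the request body, like a submitted form
    return requests.post(url, data=form_data)

def search(url, query, page=1):
    # Query arguments: params is URL-encoded and appended after "?"
    return requests.get(url, params={"q": query, "page": page})

# Preview what would be sent, without touching the network
get_preview = requests.Request("GET", URL, params={"q": "python", "page": 2}).prepare()
post_preview = requests.Request("POST", URL, data={"name": "test"}).prepare()
print(get_preview.url)    # http://example.com/search?q=python&page=2
print(post_preview.body)  # name=test
```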

Inspecting the Response

See what response code the server sent back (useful for detecting 4XX or 5XX errors)

Access the full response as text (get the HTML of the page in a big string)

Look for a specific substring of text within the response

Check the response’s Content-Type (see if you got back HTML, JSON, XML, etc.)
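The checks above can be bundled into a small helper. This is a sketch; inspect_response is a hypothetical name, and it works on any requests.Response (or any object with the same attributes):

```python
def inspect_response(r, needle):
    """Summarize a requests.Response: status, body size, substring hit, content type."""
    return {
        "status": r.status_code,                            # e.g. 200, 404, 503
        "is_error": r.status_code >= 400,                   # True for 4XX and 5XX
        "length": len(r.text),                              # r.text: the whole body as one string
        "found": needle in r.text,                          # plain substring search
        "content_type": r.headers.get("Content-Type", ""),  # HTML, JSON, XML, ...
    }

# Usage (needs network access):
#   import requests
#   r = requests.get("http://example.com")
#   print(inspect_response(r, "Example Domain"))
```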

Extracting Content from HTML

Now that you’ve made your HTTP request and gotten some HTML content, it’s time to parse it so that you can extract the values you’re looking for.

Using Regular Expressions

Using Regular Expressions to look for HTML patterns is famously NOT recommended at all.

However, regular expressions are still useful for finding specific string patterns like prices, email addresses or phone numbers.

Run a regular expression on the response text to look for specific string patterns:
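For example, here is a sketch that pulls dollar prices out of a page's text. The pattern is an assumption covering amounts like $5, $999.99 and $1,299.00; adapt it to the formats the target site actually uses:

```python
import re

# A "$", 1-3 digits, optional ",ddd" groups, optional ".dd" cents
PRICE_RE = re.compile(r"\$\d{1,3}(?:,\d{3})*(?:\.\d{2})?")

def find_prices(text):
    # findall returns every non-overlapping match; in a scraper, pass r.text
    return PRICE_RE.findall(text)

print(find_prices("Was $1,299.99, now $999 (save $300.99)"))
# ['$1,299.99', '$999', '$300.99']
```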

Using BeautifulSoup

BeautifulSoup is widely used due to its simple API and its powerful extraction capabilities. It has many different parser options that allow it to understand even the most poorly written HTML pages – and the default one works great.

Compared to libraries that offer similar functionality, it’s a pleasure to use. To get started, you’ll have to turn the HTML text that you got in the response into a nested, DOM-like structure that you can traverse and search.
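A minimal sketch of that step, using a tiny inline HTML string in place of a real response (in a scraper you would pass r.text) and Python's built-in html.parser:

```python
from bs4 import BeautifulSoup

html = "<html><body><p class='intro'>Hello, <b>world</b>!</p></body></html>"
# Turn the raw HTML string into a nested tree you can traverse and search
soup = BeautifulSoup(html, "html.parser")
print(soup.p.b.string)  # world
```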

Look for all anchor tags on the page (useful if you’re building a crawler and need to find the next pages to visit)

Look for all tags with a specific class attribute (e.g. <li class="...">...</li>)

Look for the tag with a specific ID attribute (e.g. <div id="...">...</div>)

Look for nested patterns of tags (useful for finding generic elements, but only within a specific section of the page)

Look for all tags matching CSS selectors (similar query to the last one, but might be easier to write for someone who knows CSS)
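The searches above can be sketched against a small, made-up page fragment; the id results and the class search-result are hypothetical names:

```python
from bs4 import BeautifulSoup

# Made-up page fragment for illustration
html = """
<div id="results">
  <ul>
    <li class="search-result"><a href="/page/1">One</a></li>
    <li class="search-result"><a href="/page/2">Two</a></li>
  </ul>
</div>
<a href="/about">About</a>
"""
soup = BeautifulSoup(html, "html.parser")

links = soup.find_all("a")                               # every anchor tag on the page
results = soup.find_all("li", class_="search-result")    # tags with a given class
container = soup.find("div", id="results")              # the one tag with a given id
nested = container.find_all("a")                         # anchors inside that div only
via_css = soup.select("div#results li.search-result a")  # same search as a CSS selector

print([a["href"] for a in via_css])  # ['/page/1', '/page/2']
```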

Get a list of strings representing the inner contents of a tag (this includes both the text nodes as well as the text representation of any other nested HTML tags within)

Return only the text contents within this tag, but ignore the text representation of other HTML tags (useful for stripping out pesky <span>, <strong>, <i>, or other inline tags that might show up sometimes)

Convert the text you are extracting from Unicode to ASCII if you’re having issues printing it to the console or writing it to files

Get the attribute of a tag (useful for grabbing the src attribute of an <img> tag or the href attribute of an <a> tag)
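A sketch of these text and attribute operations on a made-up snippet. In Python 3, strings are already Unicode, so the ASCII conversion here simply drops characters that can't be encoded:

```python
from bs4 import BeautifulSoup

html = '<p>Price: <strong>café</strong> deal <a href="/deal">here</a></p>'
p = BeautifulSoup(html, "html.parser").p

# Inner contents: text nodes plus the markup of nested tags, as strings
inner = [str(node) for node in p.contents]
# Text only, with the inline <strong> and <a> tags stripped out
text = p.get_text()
# ASCII-only copy: characters that can't be encoded (like "é") are dropped
ascii_text = text.encode("ascii", "ignore").decode("ascii")
# Attribute access works like a dict; .get() returns None instead of raising
href = p.a["href"]
missing = p.a.get("title")
```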

Putting several of these concepts together, here’s a common idiom: iterating over a bunch of container tags and pulling out content from each of them
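A sketch of that idiom, assuming hypothetical product containers marked with class="product":

```python
from bs4 import BeautifulSoup

html = """
<div class="product"><h2>Widget</h2><span class="price">$10</span></div>
<div class="product"><h2>Gadget</h2><span class="price">$25</span></div>
"""
soup = BeautifulSoup(html, "html.parser")

items = []
for product in soup.find_all("div", class_="product"):
    # Search only within the current container so each name stays paired with its price
    items.append({
        "name": product.find("h2").get_text(strip=True),
        "price": product.find("span", class_="price").get_text(strip=True),
    })
print(items)
```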

Using XPath Selectors

BeautifulSoup doesn’t currently support XPath selectors, and I’ve found them to be really terse and more of a pain than they’re worth. I haven’t found a pattern I couldn’t parse using the above methods.

If you’re really dedicated to using them for some reason, you can use the lxml library instead of BeautifulSoup.
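For comparison, a minimal XPath sketch with lxml (installed separately with pip install lxml), on a made-up fragment:

```python
from lxml import html

doc = html.fromstring("<div><a href='/one'>One</a><a href='/two'>Two</a></div>")
hrefs = doc.xpath("//a/@href")   # attribute values of every <a>
texts = doc.xpath("//a/text()")  # text nodes inside every <a>
print(hrefs, texts)
```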

Storing Your Data

Now that you’ve extracted your data from the page, it’s time to save it somewhere.

Note: The implication in these examples is that the scraper went out and collected all of the items, and then waited until the very end to iterate over all of them and write them to a spreadsheet or database.

I did this to simplify the code examples. In practice, you’d want to store the values you extract from each page as you go, so that you don’t lose all of your progress if you hit an exception towards the end of your scrape and have to go back and re-scrape every page.

Writing to a CSV

Probably the most basic thing you can do is write your extracted items to a CSV file. By default, each row passed to a csv.writer object is a sequence of values, typically a Python list.


In order for the spreadsheet to make sense and have consistent columns, you need to make sure all of the items that you’ve extracted have their properties in the same order. This isn’t usually a problem if the lists are created consistently.
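A minimal sketch of writing list-based rows, assuming made-up items. io.StringIO stands in for a real file so the example runs anywhere; for a file, use open("data.csv", "w", newline=""):

```python
import csv
import io

# Hypothetical scraped items: every list has its columns in the same order
items = [["Widget", "$10", "4.5"], ["Gadget", "$25", "4.0"]]

def write_rows(f, rows):
    writer = csv.writer(f)
    writer.writerow(["name", "price", "rating"])  # header row
    for row in rows:
        writer.writerow(row)                       # one list per spreadsheet row

buf = io.StringIO()
write_rows(buf, items)
print(buf.getvalue())
```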

If you’re extracting lots of properties about each item, sometimes it’s more useful to store the item as a python dict instead of having to remember the order of columns within a row. The csv module has a handy DictWriter that keeps track of which column is for writing which dict key.
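The same idea with csv.DictWriter, again with hypothetical items and an in-memory buffer in place of a file:

```python
import csv
import io

items = [
    {"name": "Widget", "price": "$10"},
    {"name": "Gadget", "price": "$25"},
]

buf = io.StringIO()  # for a real file: open("data.csv", "w", newline="")
writer = csv.DictWriter(buf, fieldnames=["name", "price"])
writer.writeheader()
for item in items:
    writer.writerow(item)  # fieldnames decide which column each dict key goes into
print(buf.getvalue())
```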

Writing to a SQLite Database


You can also use a simple SQL insert if you’d prefer to store your data in a database for later querying and retrieval.
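A minimal sketch using Python's built-in sqlite3 module, with a hypothetical products table and an in-memory database so it runs anywhere:

```python
import sqlite3

# Hypothetical scraped rows: (name, price)
items = [("Gadget", 25.0), ("Widget", 10.0)]

conn = sqlite3.connect(":memory:")  # use a filename such as "scrape.db" to keep the data
conn.execute("CREATE TABLE IF NOT EXISTS products (name TEXT, price REAL)")
# "?" placeholders let sqlite3 handle quoting, which matters for arbitrary scraped text
conn.executemany("INSERT INTO products (name, price) VALUES (?, ?)", items)
conn.commit()

rows = conn.execute("SELECT name, price FROM products ORDER BY price").fetchall()
print(rows)  # [('Widget', 10.0), ('Gadget', 25.0)]
conn.close()
```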

More Advanced Topics

These aren’t really things you’ll need if you’re building a simple, small scale scraper for 90% of websites. But they’re useful tricks to keep up your sleeve.

Javascript Heavy Websites

Contrary to popular belief, you do not need any special tools to scrape websites that load their content via Javascript. In order for the information to get from their server and show up on a page in your browser, that information had to have been returned in an HTTP response somewhere.

It usually means that you won’t be making an HTTP request to the page’s URL that you see at the top of your browser window, but instead you’ll need to find the URL of the AJAX request that’s going on in the background to fetch the data from the server and load it into the page.

There’s not really an easy code snippet I can show here, but if you open the Chrome or Firefox Developer Tools, you can load the page, go to the “Network” tab and then look through all of the requests that are being sent in the background to find the one that’s returning the data you’re looking for. Start by filtering the requests to only XHR or JS to make this easier.

Once you find the AJAX request that returns the data you’re hoping to scrape, then you can make your scraper send requests to this URL, instead of to the parent page’s URL. If you’re lucky, the response will be encoded with JSON which is even easier to parse than HTML.
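Once the endpoint is known, fetching it takes only a few lines. This sketch assumes a hypothetical URL and a hypothetical response shape of {"products": [{"name": ...}]}; inspect the real response to see its structure:

```python
import requests

# Hypothetical endpoint, found by filtering the Network tab to XHR requests
AJAX_URL = "https://example.com/api/products?page=1"

def fetch_json(url):
    r = requests.get(url, timeout=10)
    r.raise_for_status()  # stop on 4XX/5XX instead of parsing an error page
    return r.json()       # decode the JSON body into Python dicts and lists

def product_names(payload):
    # Assumes the hypothetical shape {"products": [{"name": ...}, ...]}
    return [item["name"] for item in payload.get("products", [])]

# Usage (needs network access):
#   print(product_names(fetch_json(AJAX_URL)))
```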

Content Inside Iframes

This is another topic that causes a lot of hand-wringing for no reason. Sometimes the page you’re trying to scrape doesn’t actually contain the data in its HTML, but instead it loads the data inside an iframe.

Again, it’s just a matter of making the request to the right URL to get the data back that you want. Make a request to the outer page, find the iframe, and then make another HTTP request to the iframe’s src attribute.
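A sketch of that two-step fetch. The helper names are hypothetical; urljoin handles iframe src values that are relative to the outer page:

```python
from urllib.parse import urljoin

import requests
from bs4 import BeautifulSoup

def iframe_urls(page_url, html):
    soup = BeautifulSoup(html, "html.parser")
    # src=True matches only iframes that actually have a src attribute;
    # urljoin resolves relative src values against the outer page's URL
    return [urljoin(page_url, frame["src"]) for frame in soup.find_all("iframe", src=True)]

def fetch_iframe_contents(page_url):
    outer = requests.get(page_url, timeout=10)  # first request: the outer page
    # then one request per iframe URL found in it
    return [requests.get(u, timeout=10).text for u in iframe_urls(page_url, outer.text)]
```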

Sessions and Cookies

While HTTP is stateless, sometimes you want to use cookies to identify yourself consistently across requests to the site you’re scraping.

The most common example of this is needing to log in to a site in order to access protected pages. Without the correct cookies, a request to the URL will likely be redirected to a login form or answered with an error response.

However, once you successfully log in, a session cookie is set that identifies who you are to the website. As long as future requests send this cookie along, the site knows who you are and what you have access to.
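A minimal sketch using requests.Session, which stores cookies set by the server and sends them with later requests. The URLs and form field names (username, password) are hypothetical; check the site's actual login form:

```python
import requests

LOGIN_URL = "https://example.com/login"        # hypothetical login endpoint
PROTECTED_URL = "https://example.com/account"  # hypothetical members-only page

def scrape_protected(username, password):
    with requests.Session() as session:
        # Log in once; cookies the server sets in the response are stored on the session
        resp = session.post(LOGIN_URL, data={"username": username, "password": password}, timeout=10)
        resp.raise_for_status()
        # The stored session cookie is sent automatically with this request
        return session.get(PROTECTED_URL, timeout=10).text
```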

Delays and Backing Off

If you want to be polite and not overwhelm the target site you’re scraping, you can introduce an intentional delay or lag in your scraper to slow it down.

Some also recommend adding a backoff that’s proportional to how long the site took to respond to your request. That way if the site gets overwhelmed and starts to slow down, your code will automatically back off.
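A sketch of both ideas; the delay range and the backoff factor are arbitrary assumptions, not recommended values:

```python
import random
import time

def polite_pause(min_s=1.0, max_s=3.0):
    # Sleep for a random interval so requests aren't fired in a tight loop
    time.sleep(random.uniform(min_s, max_s))

def backoff_delay(response_seconds, factor=2.0, minimum=1.0):
    # Wait longer when the site is slow to respond, but never less than `minimum`
    return max(minimum, factor * response_seconds)

# Usage inside a scraping loop (needs network access):
#   r = requests.get(url, timeout=10)
#   time.sleep(backoff_delay(r.elapsed.total_seconds()))
```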

Spoofing the User Agent

By default, the requests library sets the User-Agent header on each request to something like “python-requests/2.12.4”. You might want to change it to identify your web scraper, perhaps providing a contact email address so that an admin from the target website can reach out if they see you in their logs.

More commonly, this is used to make it appear that the request is coming from a normal web browser, and not a web scraping program.
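A sketch of setting a custom User-Agent; the scraper name and email address are placeholders:

```python
import requests

HEADERS = {
    # Identify your scraper and give site admins a way to contact you (placeholder values)
    "User-Agent": "my-scraper/1.0 (contact: you@example.com)",
}

print(requests.utils.default_user_agent())  # the default, e.g. python-requests/<version>

req = requests.Request("GET", "https://example.com", headers=HEADERS).prepare()
print(req.headers["User-Agent"])  # my-scraper/1.0 (contact: you@example.com)
# To send it: requests.get("https://example.com", headers=HEADERS)
```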

Using Proxy Servers

Even if you spoof your User Agent, the site you are scraping can still see your IP address, since they have to know where to send the response.

If you’d like to obfuscate where the request is coming from, you can use a proxy server in between you and the target site. The scraped site will see the request coming from that server instead of your actual scraping machine.

If you’d like to make your requests appear to be spread out across many IP addresses, then you’ll need access to many different proxy servers. You can keep track of them in a list and then have your scraping program simply go down the list, picking off the next one for each new request, so that the proxy servers get even rotation.
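A minimal round-robin sketch using itertools.cycle; the proxy addresses are placeholders for servers you actually have access to:

```python
import itertools

import requests

# Placeholder proxy addresses
PROXIES = [
    "http://10.0.0.1:8080",
    "http://10.0.0.2:8080",
    "http://10.0.0.3:8080",
]
proxy_cycle = itertools.cycle(PROXIES)  # yields the list in order, then starts over

def get_via_next_proxy(url):
    proxy = next(proxy_cycle)  # take the next proxy in the rotation
    # Route both http:// and https:// URLs through the chosen proxy
    return requests.get(url, proxies={"http": proxy, "https": proxy}, timeout=10)
```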


Setting Timeouts

If you’re experiencing slow connections and would prefer that your scraper moved on to something else, you can specify a timeout on your requests.
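A sketch of a request with a timeout. In requests, the timeout limits the connection attempt and each wait for data from the server, not the total download time:

```python
import requests

def fetch_or_skip(url, seconds=5):
    try:
        # Give up if connecting, or any single wait for data, takes longer than `seconds`
        return requests.get(url, timeout=seconds)
    except requests.exceptions.Timeout:
        return None  # the caller can move on to the next URL
```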

Handling Network Errors

Just as you should never trust user input in web applications, you shouldn’t trust the network to behave well on large web scraping projects. Eventually you’ll hit closed connections, SSL errors or other intermittent failures.
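One common way to cope is to retry failed requests a few times. This sketch (the attempt count and the linear backoff are arbitrary choices) catches requests.exceptions.RequestException, the base class for connection errors, SSL errors, timeouts and, after raise_for_status(), HTTP error codes:

```python
import time

import requests

def get_with_retries(url, attempts=3, wait=2.0):
    for attempt in range(1, attempts + 1):
        try:
            r = requests.get(url, timeout=10)
            r.raise_for_status()  # turn 4XX/5XX into requests.exceptions.HTTPError
            return r
        except requests.exceptions.RequestException:
            # Closed connections, SSL errors, timeouts and HTTP errors all land here
            if attempt == attempts:
                raise  # out of retries: let the caller decide what to do
            time.sleep(wait * attempt)  # wait a little longer after each failure
```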

Learn More

If you’d like to learn more about web scraping, I currently have an ebook and online course that I offer, as well as a free sandbox website that’s designed to be easy for beginners to scrape.

You can also subscribe to my blog to get emailed when I release new articles.

What is Web Scraping?

Web scraping is a technique for extracting large amounts of data from websites. The term 'scraping' refers to obtaining information from another source (webpages) and saving it to a local file. For example, suppose you are working on a phone comparison website, where you need the prices, ratings, and model names of mobile phones in order to compare them. Collecting these details by checking various sites by hand would take a long time. In that case, web scraping plays an important role: by writing a few lines of code, you can get the desired results.

Web scraping extracts data from websites in an unstructured format and helps convert it into a structured form.

Startups prefer web scraping because it is a cheap and effective way to get large amounts of data without partnering with a data-selling company.

Is Web Scraping legal?

Here the question arises whether web scraping is legal. The answer is that some sites allow it when it is done legally. Web scraping is just a tool; you can use it in the right way or the wrong way.

Web scraping is illegal if someone tries to scrape nonpublic data. Nonpublic data is not accessible to everyone, and extracting it violates legal terms.

There are several tools available to scrape data from websites, such as:

- Scraping-Bot

- ScraperAPI

- Octoparse

- Import.io

- Webhose.io

- Dexi.io

- Outwit

- Diffbot

- Content Grabber

- Mozenda

- Web Scraper Chrome extension

Why Web Scraping?

As discussed above, web scraping is used to extract data from websites, but we should also know how to use that raw data. It can be used in various fields. Let's look at the uses of web scraping:

- Dynamic Price Monitoring

It is widely used to collect data from several online shopping sites, compare product prices, and make profitable pricing decisions. Price monitoring with scraped data helps companies understand market conditions and supports dynamic pricing, helping them stay ahead of competitors.

- Market Research

Web scraping is well suited to market trend analysis, providing insights into a particular market. Large organizations require a great deal of data, and web scraping can provide that data reliably and accurately.

- Email Gathering

Many companies use scraped email addresses for email marketing, which lets them target a specific audience.

- News and Content Monitoring

A single news cycle can create an outstanding opportunity or a genuine threat to your business. If your company depends on news analysis, or frequently appears in the news, web scraping provides an effective way to monitor and parse the most critical stories. News articles and social media platforms can directly influence the stock market.

- Social Media Scraping

Web scraping plays an essential role in extracting data from social media websites such as Twitter, Facebook, and Instagram to find trending topics.

- Research and Development

Large data sets, such as general information, statistics, and temperature readings, are scraped from websites, then analyzed and used to carry out surveys or research and development.

Why use Python for Web Scraping?

There are other popular programming languages, so why choose Python for web scraping? Below is a list of the features that make Python so useful for web scraping.

- Dynamically Typed

In Python, we don't need to declare data types for variables; we can use a variable directly wherever it is required. This saves time and speeds up development. Python determines a variable's type at runtime from the value assigned to it.

- Vast collection of libraries

Python has an extensive range of libraries, such as NumPy, Matplotlib, Pandas, and SciPy, that provide flexibility for many purposes. It suits almost every emerging field, including web scraping, where it is used to extract and manipulate data.

- Less Code

The purpose of web scraping is to save time. But what if you spend more time writing the code? That's why we use Python: it can perform a task in a few lines of code.

- Open-Source Community

Python is open-source, which means it is freely available for everyone. It has one of the biggest communities across the world where you can seek help if you get stuck anywhere in Python code.

The basics of web scraping

Web scraping consists of two parts: a web crawler and a web scraper. In simple words, the web crawler is the horse and the scraper is the chariot: the crawler leads the scraper, which extracts the requested data. Let's look at these two components of web scraping:

- The crawler

A web crawler is generally called a 'spider.' It is a program that browses the internet, following the given links to index and search for content. It searches for the relevant information requested by the programmer.

- The scraper

A web scraper is a dedicated tool designed to extract data from websites quickly and effectively. Web scrapers vary widely in design and complexity, depending on the project.

How does Web Scraping work?

Web scraping follows these steps:

Step - 1: Find the URL that you want to scrape

First, you should understand your project's data requirements. A webpage or website contains a large amount of information, so scrape only the relevant information. In simple words, the developer should be familiar with the data requirements.

Step - 2: Inspecting the Page

The data is extracted in raw HTML format, which must be carefully parsed to reduce the noise in the raw data. In some cases, the data can be as simple as a name and address, or as complex as high-dimensional weather and stock market data.

Step - 3: Write the code

Write the code to extract the relevant information, then run it.

Step - 4: Store the data in a file

Store the information in the required format, such as CSV, XML, or JSON.

Getting Started with Web Scraping

Python has a vast collection of libraries, including several very useful ones for web scraping. Let's look at the libraries we need.

Libraries used for web scraping

- Selenium - Selenium is an open-source automated testing library that drives a web browser. It is used to automate and check browser activities. To install this library, run the following command in your terminal: `pip install selenium`

Note - It is good to use the PyCharm IDE.

- Pandas

The Pandas library is used for data manipulation and analysis. Here it is used to organize the extracted data and store it in the desired format.

- BeautifulSoup

Let's understand the BeautifulSoup library in detail.

Installation of BeautifulSoup

You can install BeautifulSoup by typing the following command: `pip install beautifulsoup4`

Installing a parser

BeautifulSoup supports Python's built-in HTML parser and several third-party Python parsers. You can install any of them, depending on your needs. BeautifulSoup's parsers are the following:

| Parser | Typical usage |
|---|---|
| Python's html.parser | BeautifulSoup(markup, 'html.parser') |
| lxml's HTML parser | BeautifulSoup(markup, 'lxml') |
| lxml's XML parser | BeautifulSoup(markup, 'lxml-xml') |
| html5lib | BeautifulSoup(markup, 'html5lib') |

We recommend installing the html5lib parser, which parses pages the same way a web browser does, or the lxml parser, which is faster.

Type the following command in your terminal: `pip install html5lib` (or `pip install lxml`)

BeautifulSoup transforms a complex HTML document into a complex tree of Python objects, but there are a few essential object types that you will use most often:

- Tag

A Tag object corresponds to an XML or HTML tag in the original document.

A tag has many attributes and methods, but its most important features are its name and its attributes.

- Name

Every tag has a name, accessible as .name.
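A short sketch of getting a Tag and its name, using a made-up snippet:

```python
from bs4 import BeautifulSoup

soup = BeautifulSoup('<b class="boldest">Extremely bold</b>', "html.parser")
tag = soup.b      # the first <b> tag in the document
print(type(tag))  # <class 'bs4.element.Tag'>
print(tag.name)   # b

tag.name = "blockquote"  # renaming the tag changes how it is rendered
print(tag)               # <blockquote class="boldest">Extremely bold</blockquote>
```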

- Attributes

A tag may have any number of attributes. The tag <b id='boldest'> has an attribute 'id' whose value is 'boldest'. We can access a tag's attributes by treating the tag as a dictionary.

We can add, remove, and modify a tag's attributes the same way, by treating the tag as a dictionary.
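A sketch of reading and changing attributes on the <b id='boldest'> tag from the text above:

```python
from bs4 import BeautifulSoup

tag = BeautifulSoup("<b id='boldest'>bold</b>", "html.parser").b
print(tag["id"])  # boldest
print(tag.attrs)  # {'id': 'boldest'}

tag["id"] = "verybold"        # modify an attribute
tag["another-attribute"] = 1  # add an attribute
del tag["id"]                 # remove an attribute
print(tag)                    # <b another-attribute="1">bold</b>
print(tag.get("id"))          # None: .get() avoids a KeyError for missing attributes
```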

- Multi-valued Attributes

In HTML5, some attributes can have multiple values. The most common multi-valued attribute is class, since an element can have more than one CSS class. Others are rel, rev, accept-charset, headers, and accesskey.
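A sketch showing that class comes back as a list, while a single-valued attribute like id stays a plain string even when it contains a space:

```python
from bs4 import BeautifulSoup

p = BeautifulSoup('<p class="body strikeout" id="my id">text</p>', "html.parser").p
print(p["class"])  # ['body', 'strikeout']  (multi-valued: split into a list)
print(p["id"])     # my id  (not multi-valued: kept as one string)
```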

- NavigableString

In BeautifulSoup, a string refers to the text within a tag. BeautifulSoup uses the NavigableString class to contain these bits of text.

A NavigableString can't be edited in place, but it can be replaced with another string using replace_with().

If you want to use a NavigableString outside BeautifulSoup, call str() on it (unicode() in Python 2) to turn it into an ordinary Python string.
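A sketch of working with a NavigableString in Python 3, using a made-up snippet:

```python
from bs4 import BeautifulSoup, NavigableString

tag = BeautifulSoup('<b class="boldest">Extremely bold</b>', "html.parser").b
s = tag.string
print(type(s))  # <class 'bs4.element.NavigableString'>

s.replace_with("No longer bold")  # swap the text node for a new string
print(tag)                        # <b class="boldest">No longer bold</b>

plain = str(tag.string)  # an ordinary Python str, no longer tied to the tree
```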

- BeautifulSoup object

The BeautifulSoup object represents the complete parsed document as a whole. In most cases, you can treat it like a Tag object, which means it supports most of the methods for navigating and searching the tree.
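A sketch of the BeautifulSoup object in use, on a made-up document:

```python
from bs4 import BeautifulSoup

soup = BeautifulSoup("<html><head><title>Demo</title></head><body><p>Hi</p></body></html>", "html.parser")
print(soup.name)                  # [document]
print(soup.title.string)          # Demo
print(soup.find("p").get_text())  # Hi  (the same search methods as a Tag)
```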

Web Scraping Example:

Let's take an example to understand scraping in practice by extracting data from a webpage and inspecting the whole page.

First, open your favorite page on Wikipedia and inspect the whole page. Before extracting data from the webpage, make sure you know exactly what you need.

Next, we extract all the headings of the webpage by class name. Here, front-end knowledge plays an essential role in inspecting the webpage.

To do this, we import bs4 and the requests library, create a res object that sends a request to the webpage, and then extract all the headings from the parsed page.
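A minimal sketch of those steps. The article URL is a hypothetical choice, and the mw-headline class is an assumption based on older Wikipedia markup, which has since changed; inspect the live page and pass the class name you actually see:

```python
import requests
from bs4 import BeautifulSoup

URL = "https://en.wikipedia.org/wiki/Web_scraping"  # hypothetical choice of article

def headings_by_class(html, class_name):
    soup = BeautifulSoup(html, "html.parser")
    # class_ has a trailing underscore because "class" is a Python keyword
    return [tag.get_text(strip=True) for tag in soup.find_all(class_=class_name)]

def scrape_headings(url, class_name="mw-headline"):
    res = requests.get(url, timeout=10)  # res holds the server's response
    print("Status code:", res.status_code)
    return headings_by_class(res.text, class_name)
```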


Let's look at another example: we will make a GET request to the URL and create a parse tree object (soup) using BeautifulSoup and the third-party 'html5lib' parser.

Here we will scrape the webpage at the given link (https://www.javatpoint.com/). Printing the resulting soup displays all the HTML code of the javatpoint homepage.
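A sketch of the fetch-and-parse step for that page. html5lib must be installed separately, and get_soup is a hypothetical helper name:

```python
import requests
from bs4 import BeautifulSoup

URL = "https://www.javatpoint.com/"

def get_soup(url):
    r = requests.get(url, timeout=10)
    # r.content is the raw bytes; BeautifulSoup detects the encoding itself
    return BeautifulSoup(r.content, "html5lib")

# Usage (needs network access and html5lib):
#   soup = get_soup(URL)
#   print(soup.prettify())  # the whole page's HTML, indented one tag per line
```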

Using the BeautifulSoup object, i.e. soup, we can collect the data we need. Let's print some interesting information using the soup object:

- Let's print the title of the web page with print(soup.title).

- The output includes the HTML <title> tag along with the title. If you want only the text without the tag, use soup.title.text instead.

- We can get all the links on the page along with their attributes, such as href, title, and inner text, by looping over soup.find_all('a').

Output: It prints all the links along with their attributes. Here we display a few of them:


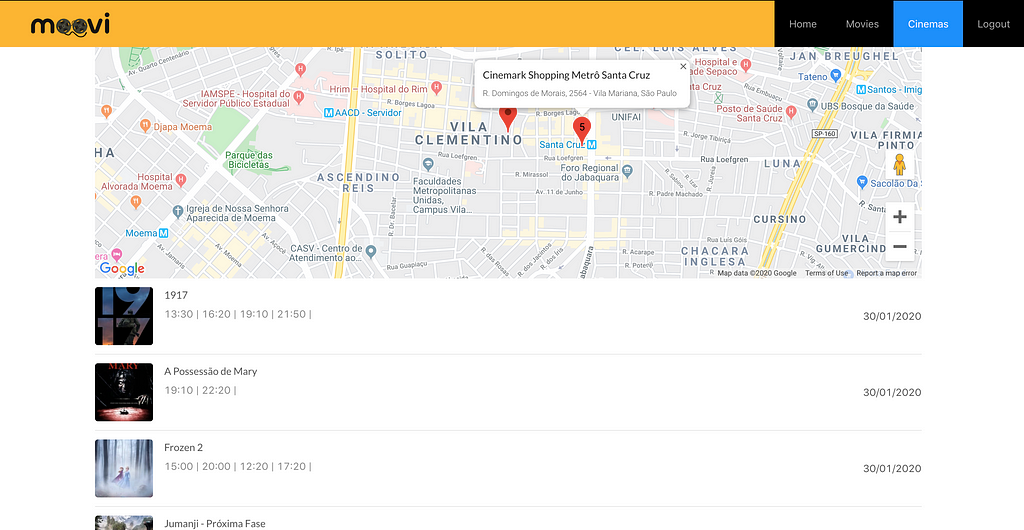
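The title and link steps above can be sketched offline on a small stand-in page (the HTML here is made up; for the real site, parse the downloaded page instead):

```python
from bs4 import BeautifulSoup

html = """<html><head><title>Tutorials List</title></head><body>
<a href="/python-tutorial" title="Python">Python Tutorial</a>
<a href="/java-tutorial">Java Tutorial</a>
</body></html>"""
soup = BeautifulSoup(html, "html.parser")

print(soup.title)       # <title>Tutorials List</title>
print(soup.title.text)  # Tutorials List

links = []
for link in soup.find_all("a"):
    # .get() returns None for attributes the tag doesn't have (e.g. a missing title)
    links.append((link.get("href"), link.get("title"), link.text))
    print(links[-1])
```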

Demo: Scraping Data from Flipkart Website

In this example, we will scrape the mobile phone prices, ratings, and model names from Flipkart, one of the popular e-commerce websites. The prerequisites for this task are:

Prerequisites:

- Python 2.x or Python 3.x with Selenium, BeautifulSoup, Pandas libraries installed.

- Google Chrome browser

- A parser such as html.parser, lxml, etc.

Step - 1: Find the desired URL to scrape

The initial step is to find the URL that you want to scrape. Here we are extracting mobile phone details from Flipkart. The URL of this page is https://www.flipkart.com/search?q=iphones&otracker=search&otracker1=search&marketplace=FLIPKART&as-show=on&as=off.

Step -2: Inspecting the page

It is necessary to inspect the page carefully because the data is usually contained within the tags. So we need to inspect to select the desired tag. To inspect the page, right-click on the element and click 'inspect'.

Step - 3: Find the data for extracting

Extract the Price, Name, and Rating, each of which is contained in a 'div' tag.

Step - 4: Write the Code
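A sketch of the full scrape, not the tutorial's original code. It assumes Selenium 4 with Chrome available, assumes each product sits in its own div "card", and takes the card, name, price and rating class names as parameters, because Flipkart's class names are auto-generated and change frequently; read the current ones from the Inspect panel. The helper names are hypothetical:

```python
import pandas as pd
from bs4 import BeautifulSoup

URL = ("https://www.flipkart.com/search?q=iphones&otracker=search"
       "&otracker1=search&marketplace=FLIPKART&as-show=on&as=off")

def fetch_page_source(url):
    # Selenium drives a real Chrome browser, so JavaScript-rendered content is present
    from selenium import webdriver  # imported here so parsing can be tested without Selenium
    driver = webdriver.Chrome()
    try:
        driver.get(url)
        return driver.page_source
    finally:
        driver.quit()

def text_or_none(tag):
    return tag.get_text(strip=True) if tag is not None else None

def parse_products(html, card_cls, name_cls, price_cls, rating_cls):
    # The *_cls arguments are placeholders: copy the current class names from Inspect
    soup = BeautifulSoup(html, "html.parser")
    rows = []
    for card in soup.find_all("div", class_=card_cls):
        rows.append({
            "Product Name": text_or_none(card.find("div", class_=name_cls)),
            "Price": text_or_none(card.find("div", class_=price_cls)),
            "Rating": text_or_none(card.find("div", class_=rating_cls)),
        })
    return pd.DataFrame(rows, columns=["Product Name", "Price", "Rating"])

# Usage (needs Chrome and network access):
#   df = parse_products(fetch_page_source(URL), "card-cls", "name-cls", "price-cls", "rating-cls")
#   df.to_csv("products.csv", index=False, encoding="utf-8")
```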

We scraped the iPhone details and saved them in a CSV file, as you can see in the output. In the above code, we commented out a few lines for testing purposes; you can uncomment them and observe the output.

In this tutorial, we have discussed the basic concepts of web scraping and walked through a sample scrape of the leading e-commerce site Flipkart.